Getting an ML model into production is a hard nut to crack. According to Chris Chapo, SVP of Data and Analytics at Gap, 87% of the models fail before getting deployed. Even if you are in the lucky 13%, this is when the real hard work begins.

The cycle of constant monitoring and maintaining the model is called post-deployment data science. It's a crucial step since our model is live and embedded in business processes, and every mistake can cost us a lot.

But don't worry. In the following sections, we will break down the three most common failure modes and show how to spot them with NannyML. So grab a cup of coffee, sit back, and dive in.

What are the typical causes of ML model failure?

Performance degradation

The moment you put a model in production, it starts degrading.

When evaluating the ML model, there are many factors to consider - from bias and fairness to business impact. But the most critical dimension to evaluate is its performance. After all, this is what the ML model is specifically optimized for.

A model’s performance is often measured using metrics like ROC AUC, accuracy, and mean absolute error. A steady and persistent decrease in these numbers is called performance degradation.

The main reason for performance degradation is simple: the world is constantly evolving, thus the patterns in our data. The observed patterns and assumptions made in the past often do not hold in production anymore. Since ML models are trained on historical data, they tend to become outdated after some time.

The dynamics of those deteriorations can be different. To formalize it, we split them into three types:

1. Sudden performance degradation

An unforeseen drop in performance.

For example, to attract more people to a new country, Netflix gives a free first-month subscription. It brings a large number of new customers with different characteristics than historical ones, resulting in input data drift.

This can lead to a sudden drop in performance, as the model can no longer accurately predict which customers are likely to churn.

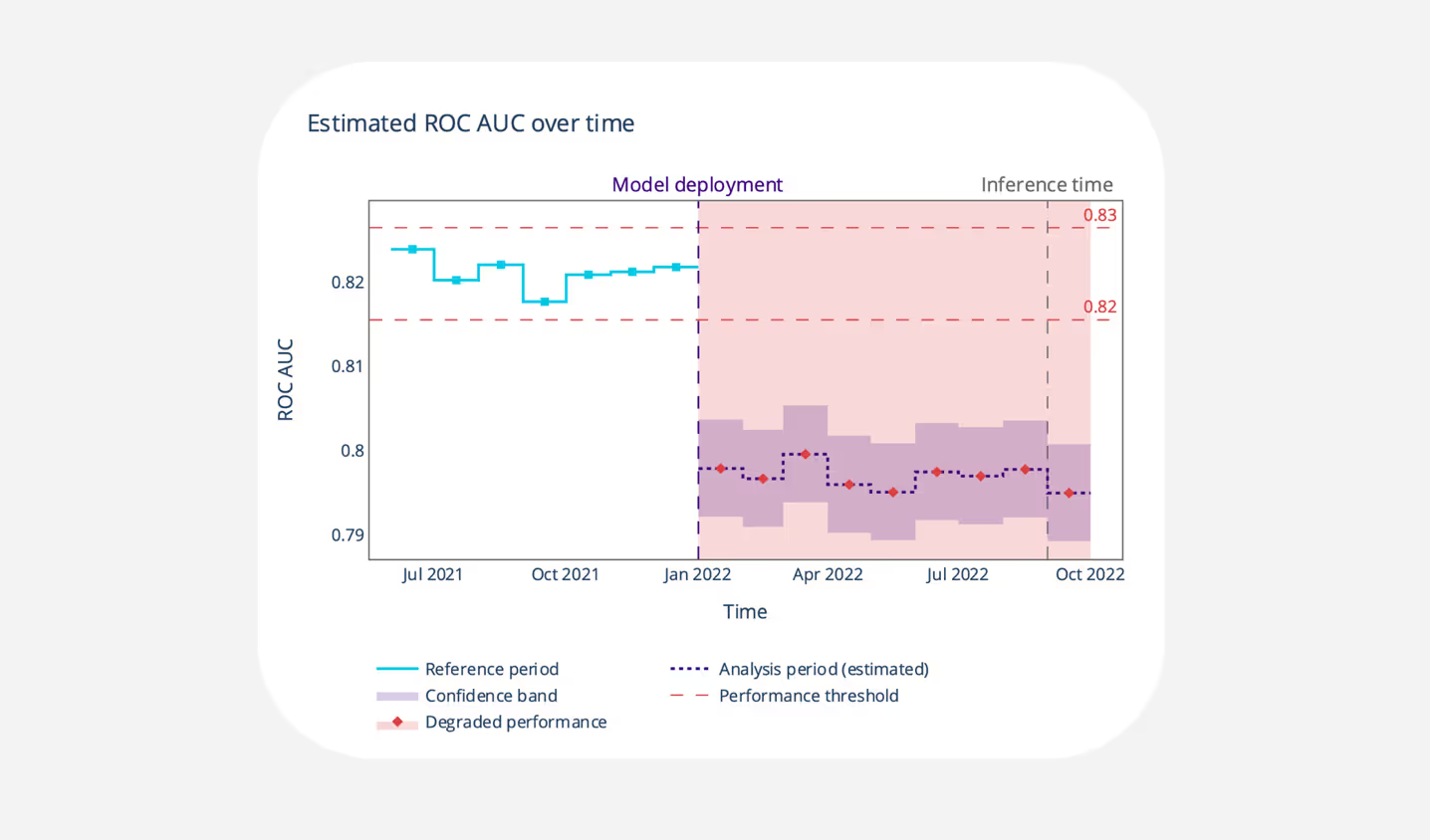

The graph shows the model’s performance before (reference period) and after (analysis period) the deployment. The performance is estimated because it is calculated in the absence of the ground truth.

The ROC AUC remained stable from January to July and suddenly dropped below the threshold in the following four months. This change could indicate Netflix's entry into the new country mentioned earlier.

2. Gradual performance degradation

The performance gradually decreases over time.

Imagine a spam detection model trained on the data with emails from a few months ago. The model is performing well, and it is deployed in production.

As time goes on, the underlying patterns that the model observed about spam emails change. For example, spammers begin using new tactics, such as sending emails from different domains or using different types of content. These changes would cause the model's performance to decrease over time because it's unable to adapt to the new patterns in the input data. This phenomenon is also known as concept drift.

The estimated ROC AUC is gradually decreasing each month, as expected. From June to October, spammers could be way ahead of the model, and we can see alerts about the performance dropping below the threshold. It is a good time to take a step back and possibly retrain the model.

3. Other types of performance degradation

E.g., recurring, cyclical.

The fluctuation of performance may be caused by the dynamic nature of the underlying problem, for example, stock price prediction. The stock price is affected by diverse factors like news, economic events, or company earnings. These events can change rapidly. As a result, the model's performance may go up or down depending on how well it is able to adapt to these changes.

Downstream business failure

A successful ML model is only as good as the system in which it operates.

Machine Learning models are an integral component of business processes. Their predictions help to improve decision-making, but they are never the endpoint. Therefore, the business's success depends on all system parts working together seamlessly.

Sadly, this is not always the case: even if our model works well, some downstream processes can fail.

Let's get back to a customer churn example, having a model with the technical performance on point. Imagine a situation where a new manager changes the retention method from calling to emailing the customer with a high probability of leaving.

Later, it turned out that emailing was less persuasive and effective than a personal call. As a result, some customers cancel their subscriptions, directly affecting the company's key performance indicators (KPIs) like revenue or customer lifetime value. The retention department, unaware of the new business decision, can bang on the door of the data science team and blame their model for the failure.

Constant monitoring helps to make sure that our model is performing well regarding our business goals. This way, as a data science team, we can address and proactively identify those problems. Instead of scrambling to explain why they didn't hit their targets, we can help to find the root cause of the problem and work together to resolve it.

Training-serving skew

Imagine putting in a lot of work to carefully select, prepare and deploy a machine learning model for production, only to discover that it is not performing as well as it did during testing. This frustrating experience, also known as training-serving skew, refers to the discrepancy in performance between the training and the production.

The main reasons for the skew are:

Data leakage

A leak of information about the target variable into the training set.

It's a cardinal sin in data science. The most illustrative example is when dealing with time-series data collected over a few years. The problem arises when the dataset is randomly shuffled before the test set is created. It can lead to the unfortunate situation of trying to predict the past from the future. As a result, we overestimate the performance of our model.

Overfitting

The inability to generalize well on unseen data.

By far, it's the most common problem in applied machine learning. It happens when the model is too complex and memorizes the training data. As a result, the model performs great on the training set but not on the new data.

Discrepancy in handling training and production data

A difference in the way the data is processed, transformed, or manipulated between training and production.

A common practice in feature engineering is having separate codebases for training and production. The difference in the frameworks may result in different outputs to the same input data, causing the skew.

The graph above represents the picture-perfect training-serving skew. The model's performance during the reference period looks promising, leading us to believe it's ready for deployment. And then, the reality hits us hard as the estimated ROC AUC falls below the threshold.

In some cases, it can be an immediate red flag (f.e, the model is overfitting) to launch a further investigation. But as we see in the example above, the model was kept alive for quite some time (10 months). If the predictions are valuable from the business point of view, the model can keep running until we find a better solution.

Nevertheless, monitoring is essential in both cases, giving us more understanding and control over the model.

Conclusions

Babysitting a Machine Learning model after the deployment is necessary to keep its business value. Sometimes things can go wrong, like a drop in performance, failure of downstream processes, or differences between how the model was trained and how it's used in production. But now, since you're aware of these potential issues, you can be more proactive in finding and resolving them with NannyML.

If you want to learn more about how to use NannyML in production, check out our other docs and blogs!

Also, if you are more into video content, we recently published some YouTube tutorials!

Lastly, we are fully open-source, so don't forget to star us on Github!⭐

.avif)