Imagine you’re hiring a new employee. You put in the effort, time and money to find the perfect candidate, test them, onboard, and extensively train them. After all that’s been done, you never evaluate their performance ever again.

Wait, what? That’s insane! What if they somehow fixate on the wrong thing? Or are faced with new challenges they are not prepared for at all? What if I told you that most enterprises do something very similar? The only difference is that the new employee is not a person. It’s a Machine Learning model.

Risks of Operational AI

Countless enterprise organizations across industries have adopted and deployed Machine Learning systems to make business-critical decisions across a full range of departments. However, once those systems are successfully deployed, that’s usually the end of the line: neither business nor engineering stakeholders focus on ensuring that they continue to provide value. And while many may think it’s “not such a big deal,” I can assure you there are consequences of not monitoring your models in production.

In 2018, news broke that Amazon had scrapped its AI recruitment tool after discovering that it was biased against women and favored male candidates when deciding who should move forward in the hiring process. Improper evaluation of risk models played a significant role in the 2008 financial crash. And a lack of evaluation and business impact estimation of criminology software meant that the lives of multiple prisoners were affected by improperly mandated prison sentences.

Now, seeing how disastrous the results of not monitoring your ML systems can be, let’s take a look at what monitoring AI actually is. In this blog series, we’ll discuss why monitoring AI systems is essential, how to do it correctly, and highlight the most important challenges associated with it.

AI Monitoring 101

Let’s start with the simplest case: a traditional, supervised learning system without online learning or any other fancy gimmicks. Just good old AI that brings actual value to the table. Without spending too much time doing a deep dive into the fringe cases, our aim will be to cover the basics of monitoring.

Elements of a Machine Learning System

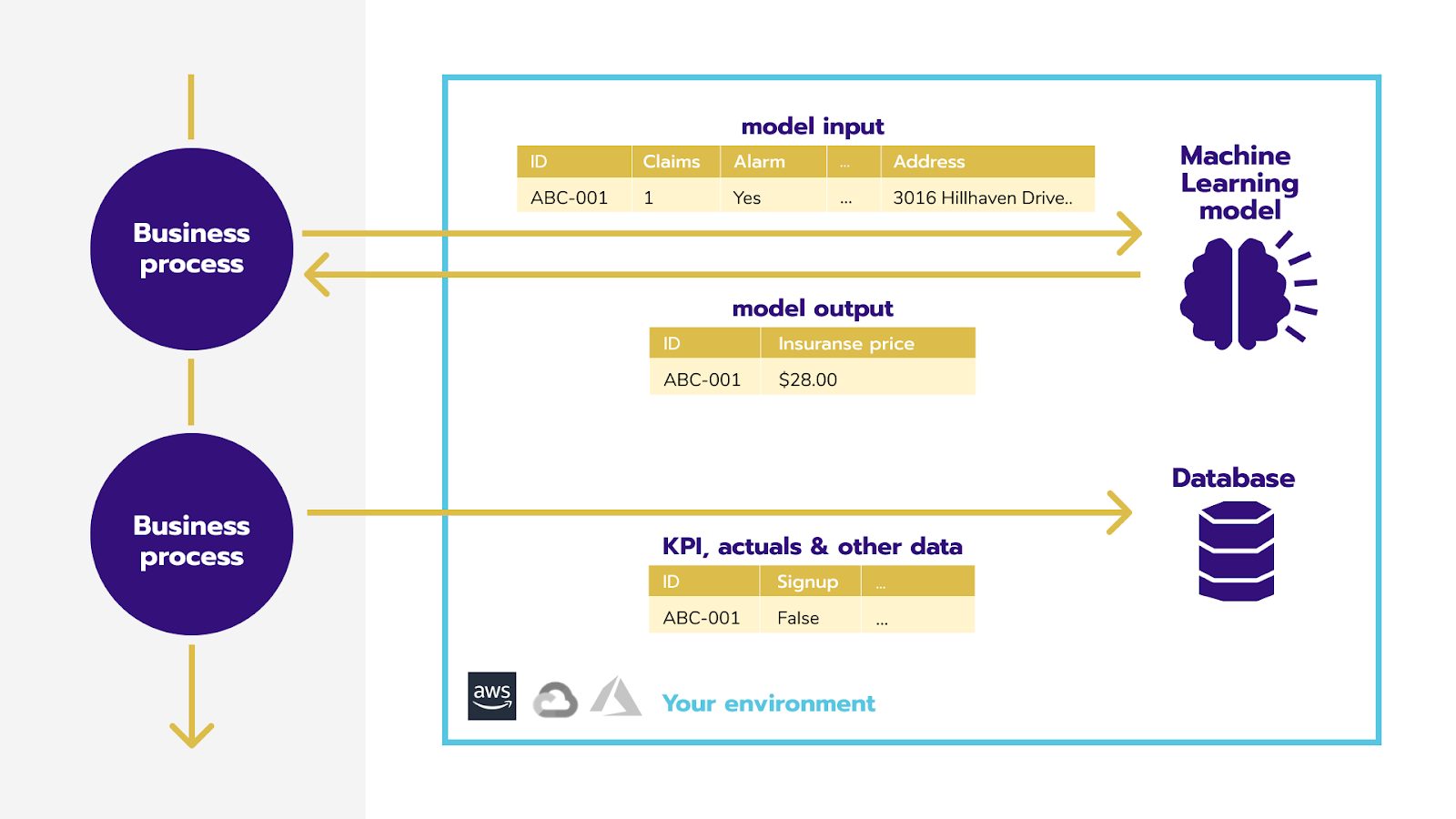

Let’s take a look at a typical ML system, using personalized insurance pricing as an example. The business process here is finding the best insurance price for every applicant, and the system around it consists of the following:

- Data about this person (input data)

- Machine learning model to predict price

- The price itself (model output)

- Any data we might be able to gather later, like the ROI on a policy, called downstream data.

Now, if we simply monitor the applicant data and the prices given by the model, and combine them with downstream data about the quality of predictions (if we have it), we can approximate the behavior of the model itself, giving us an overview of every part of the system. This overview highlights any irregularities so that we can take appropriate action. That action could be anything from retraining the model, collecting more data, or adjusting the technical KPIs to better reflect business objectives, to stopping the model and launching a deeper investigation. We’ll cover what problems might arise in a machine learning system, how to spot them, and how to resolve them in another post.
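To make the idea of monitoring every part of the system a bit more concrete, here is a minimal sketch of what logging those elements could look like. Everything in it is hypothetical and made up for illustration: the `PredictionRecord` and `MonitoringLog` names, the fields, and the example values. It is not a description of any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PredictionRecord:
    """One logged decision of the (hypothetical) pricing model."""
    applicant_id: str
    features: dict            # input data, e.g. {"age": 42}
    predicted_price: float    # model output
    realized_roi: Optional[float] = None  # downstream data, filled in later


class MonitoringLog:
    """Keeps inputs, outputs, and downstream data together per prediction."""

    def __init__(self):
        self._records = {}

    def log_prediction(self, record):
        self._records[record.applicant_id] = record

    def attach_outcome(self, applicant_id, realized_roi):
        # Downstream data usually arrives long after the prediction was made.
        self._records[applicant_id].realized_roi = realized_roi

    def labelled(self):
        """Records for which downstream data is already available."""
        return [r for r in self._records.values() if r.realized_roi is not None]

    def coverage(self):
        """Share of predictions that already have downstream data."""
        if not self._records:
            return 0.0
        return len(self.labelled()) / len(self._records)


log = MonitoringLog()
log.log_prediction(PredictionRecord("a1", {"age": 42}, 310.0))
log.log_prediction(PredictionRecord("a2", {"age": 27}, 450.0))
log.attach_outcome("a1", realized_roi=0.12)
print(f"{log.coverage():.0%} of predictions have downstream data")
```

Keeping the three pieces in one record is a design choice for this sketch: it makes it easy to see how much of the model’s output can already be checked against downstream data and how much cannot.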

Data Drift

The easiest thing to monitor is what gets fed to the AI, aka the model input. The change in that input is called data drift. An example of data drift is customers slowly getting older in your churn ML system, or a sudden spike in loan applications from people in a certain town in your credit loan default system.

Looking at it more formally, data drift occurs when the distribution of model inputs changes significantly over time. Using typical notation, it’s a change in P(X). How do we know if a change is significant? A change is significant if it may adversely affect the quality of some models. We’ll expand on this in another post.
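One simple way to put a number on a change in P(X) for a single numeric feature is the two-sample Kolmogorov-Smirnov statistic: the largest gap between the empirical distributions of a reference window and a current window. The sketch below is only an illustration under stated assumptions. The customer ages are synthetic, the `DRIFT_THRESHOLD` value is arbitrary, and in practice you would more likely reach for a library routine such as SciPy’s `ks_2samp` than write it by hand.

```python
import random
from bisect import bisect_right


def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between
    the empirical CDFs of the two samples (0 = identical, 1 = disjoint)."""
    ref = sorted(reference)
    cur = sorted(current)
    max_gap = 0.0
    # The gap between two step functions can only peak at an observed value.
    for value in ref + cur:
        ref_cdf = bisect_right(ref, value) / len(ref)
        cur_cdf = bisect_right(cur, value) / len(cur)
        max_gap = max(max_gap, abs(ref_cdf - cur_cdf))
    return max_gap


rng = random.Random(0)
# Synthetic customer ages: training-time data vs. two production windows.
reference_ages = [rng.gauss(40, 10) for _ in range(2000)]
stable_ages = [rng.gauss(40, 10) for _ in range(2000)]
older_ages = [rng.gauss(46, 10) for _ in range(2000)]  # customers got older

DRIFT_THRESHOLD = 0.1  # illustrative only; tune per feature and sample size

for name, window in [("stable", stable_ages), ("older", older_ages)]:
    stat = ks_statistic(reference_ages, window)
    print(f"{name}: KS={stat:.3f} drift={stat > DRIFT_THRESHOLD}")
```

Note that a check like this only says the inputs changed. Whether that change is significant in the sense above, meaning it may hurt the model, is a separate question.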

Data drift happens when the population that you’re working with changes. This might be a change that’s caused by external factors. But it could also be caused directly by the model being in production: by using AI on a population, we affect that population. This is an important issue we should keep in mind.

Important to note: data drift does NOT always decrease the business impact of the deployed model. But even data drift that doesn’t hurt the model needs to be monitored. Why? We’ll discuss that in a subsequent article too, so stay tuned!

Concept Drift

In most use cases, the goal of supervised machine learning is to identify the patterns between model inputs and the values we want to predict. These patterns give us a mapping that takes in everything we feed into the model and spits out what we’re trying to predict. Using the insurance pricing example, the goal of that model is to find patterns between data about an applicant and the optimal insurance policy price.

However, that mapping tends to change over time, and the relationships between applicant data and optimal policy price are not constant. (E.g., when burglaries are on the rise in a region, the optimal price for theft insurance might increase across the board to take into account the increased risk.) This change in the mapping is called concept drift. Using typical notation, it’s a change in P(y|X). Concept drift almost always reduces business impact: if the mapping between inputs and outputs changes, the old mapping identified by the algorithm is unlikely to work well under the new conditions.
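The burglary example can be turned into a small simulation. In the sketch below, which uses entirely synthetic data and hypothetical names like `sample_policies`, the distribution of the input (a risk score) stays the same, but the price that each risk score warrants goes up. A model fitted on the old mapping keeps making the same predictions, so its error grows even though P(X) never moved.

```python
import random
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    slope = covariance / variance
    return slope, y_bar - slope * x_bar


def mean_abs_error(model, xs, ys):
    slope, intercept = model
    return mean(abs(slope * x + intercept - y) for x, y in zip(xs, ys))


rng = random.Random(1)


def sample_policies(n, base_price, price_per_risk_point):
    """Synthetic data: risk score X in [0, 10] and optimal price y."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [base_price + price_per_risk_point * x + rng.gauss(0, 5) for x in xs]
    return xs, ys


# Before: the mapping the model was trained on.
train_x, train_y = sample_policies(1000, base_price=100, price_per_risk_point=20)
# After: burglaries rise, so the same risk score now warrants a higher price.
# P(X) is unchanged (same uniform range); only P(y|X) moved.
new_x, new_y = sample_policies(1000, base_price=130, price_per_risk_point=20)

model = fit_line(train_x, train_y)
mae_before = mean_abs_error(model, train_x, train_y)
mae_after = mean_abs_error(model, new_x, new_y)
print(f"MAE before drift: {mae_before:.1f}, after drift: {mae_after:.1f}")
```

Keep in mind that this comparison only works because the simulation hands us the true prices for the new window. In production, that ground truth is often missing or delayed.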

Business Impact

The business impact of the AI system is the last thing we have to monitor. It’s a relatively straightforward process if we can compare our predictions with reality. If we’re predicting demand for a product a few days in advance, we can simply compare the predictions (model output) against the real demand (ground truth), and monitor both technical and business KPIs based on that. Interestingly enough, the correlation between business KPIs and technical KPIs might weaken over time, to the point that using the technical metric to estimate the impact no longer makes sense. This, however, is a longer topic, which we’ll explore later on.
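Here is a toy version of the demand example that also shows how a technical KPI and a business KPI can tell different stories. The cost figures and the `stocking_cost` function are assumptions invented for this sketch: we pretend that we stock exactly what the model predicts, and that a lost sale costs five times as much as holding an unsold unit.

```python
def mean_abs_error(predicted, actual):
    """Technical KPI: average absolute forecast error in units."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


def stocking_cost(predicted, actual, unit_holding_cost=1.0, unit_lost_sale_cost=5.0):
    """Hypothetical business KPI: we stock what the model predicts.
    Overstock costs storage; understock costs lost sales (assumed pricier)."""
    total = 0.0
    for p, a in zip(predicted, actual):
        if p >= a:
            total += (p - a) * unit_holding_cost
        else:
            total += (a - p) * unit_lost_sale_cost
    return total


actual_demand = [100, 120, 90, 110]    # ground truth, known a few days later
over_forecast = [110, 130, 100, 120]   # always 10 units too high
under_forecast = [90, 110, 80, 100]    # always 10 units too low

for name, forecast in [("over", over_forecast), ("under", under_forecast)]:
    mae = mean_abs_error(forecast, actual_demand)
    cost = stocking_cost(forecast, actual_demand)
    print(f"{name}: MAE={mae:.1f} cost={cost:.1f}")
```

Both forecasts have exactly the same mean absolute error, yet under these assumed costs the under-forecast is five times more expensive. That is the kind of gap between technical and business KPIs that is worth watching.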

In most cases, though, we can’t compare predictions with reality, because the ground truth is either not available at all or significantly delayed. When we use an AI system to set insurance policy prices, having human analysts verify every prediction wouldn’t be feasible and would defeat the point of using an automated system. In such cases, monitoring business impact gets tricky. The best we can do is estimate the impact using past relationships (mapping) between the impact, data, and concept drift. And as you might’ve guessed, we can do it using Machine Learning. Again, more on this later.

Putting it All Together

We discussed concept drift, data drift, and how they might affect business impact. When we monitor all the data coming in and out of an AI system, we can almost immediately spot any irregularities. This gives us the chance to say, “Hold up, wait a minute” and take a closer look at what may have gone wrong. Not only does this help build a better AI system, but it also puts you back in control of the entirety of your operational ML.

What’s Next?

We discussed why monitoring ML systems is necessary and what it is, and gave a general overview of how to do it. But is that enough to fully trust your AI? Not quite. For that, we also need a way to identify the underlying causes of an issue and find a way to address them. And that’s what the next post is going to be about!

Have you experienced data or concept drift? Let’s get in touch!

Model Monitoring 101: First Step to Observability