It is another calm day at the office. You are happy because last month, you deployed another successful ML model to production. This one achieved a +90% accuracy on the test set! Nothing can go wrong.

Suddenly, while dreaming about your next raise and promotion, you start getting emails from your ML monitoring system alerting you about a drop in performance from your model.

What do you do?

Understanding the Performance Issue

Thankfully your company monitors its models constantly. So you catch the performance issue on time before it drops even more and potentially impacts the business.

If you used NannyML to monitor your models, after getting the performance drop alert, you would see something like this in your monitoring dashboard.

.avif)

The Mean Absolute Percentage Error (MAPE) increased over the performance threshold, meaning the production model is underperforming.

To understand why this is happening, you do a root cause analysis. You look for signs of univariate or multivariate drift in the production data. After playing the role of Sherlock in this investigation, you finally find two relevant features drifting.

.avif)

.avif)

To know more about data drift and how to detect it with NannyML look at Univariate Drift Detection Documentation.

After all, it wasn’t such a calm day at the office; you tap yourself on the shoulder for a good job finding out the underlying issue. But now that the problem is clear, how can we fix it?

Let’s find out what cards you can play to fight the data drift that is causing the model degradation issue. We’ll see that there’s no unique solution that fits every problem. Sadly, there is no “free lunch”. We’ll learn the pros and cons of each technique as well as when to apply one or the other.

6 ways to address data distribution shifts

1. Do Nothing

More often than not, models are left to deteriorate in production. It is not always a conscious decision. But in some cases, it makes sense. By having a good monitoring system, you can do an opportunity cost analysis and decide if it is worth it to revamp the current model.

Example when it would work ✅

Let’s say a call center has a forecasting model that estimates how many people will call for support in a day.

After a while, the model starts underperforming, and it’s frequently overestimating the number of calls. In this case, we could ignore the drop in performance since we know there will be enough agents to answer all the calls.

Since service level is more important than the costs, we can let the model deteriorate for a few months before we tackle it.

Example when it wouldn’t work ❌

A real-estate company whose main business is buying houses for a fair price and performing redecorations to later sell them at a higher price.

On one side, if the model overestimates the price, we increase the chances of buying the house. But we end up reducing the profits that we get in return. Meaning that the model’s output has a strong influence on the company’s profit.

If this model is not tuned and monitored correctly, the main business could get heavily affected. In this case, doing nothing when noticing an ML model performance drop shouldn’t be an option.

In general, taking this action makes sense:

- When the company’s business doesn’t heavily depend on the model’s output

- When the model performance drop is not too abrupt

- When the data science team is already working on a better version of the model

When taken, this action is often a good idea to bring the business team in the loop and give them visibility of the issue.

2. Inducing the new drift to the old data

This is an active research area. The idea is to use the old data and artificially induce the new observed drift. Probably the most common example of introducing drift to old data is performing data augmentation when working with images and audio signals.

Example when it would work ✅

A baby monitor device identifies when a baby is crying and alerts the parents.

Recently the company has received many complaints about the monitor not working properly. Parents are saying they receive too many false alerts. While addressing the complaints, the company noticed that in most cases, when the model gave a false alert, there was background noise from another device (TV, smartphone, etc.) or outside the room. If we think so, a kid crying on TV or an ambulance siren passing by doesn’t sound as different from a real child crying.

Real audio signals can contain background noise, echo, and other phenomena not present in the training data. To solve this, the company could induce the drift by applying data augmentation techniques to the training data set.

Example when it wouldn’t work ❌

When we don’t fully understand the reason for the drift, or the drift is complex to model.

An energy company has a model that forecasts the demand for its services. Recently, the model has not been performing well due to a change in the number of kilowatts consumed in a specific city. To fix this, the company may be tempted to apply this shift to similar regions, but this would likely result in poor performance across the board.

Cons

- You need high domain knowledge to be able to effectively model the new drift and apply it to the old data.

- This technique is not widely adopted in the industry since it is still an active research area.

Examples of this method

- Domain Adaptation under Target and Conditional Shift. Zhang et al. (2013)

- On Learning Invariant Representations for Domain Adaptation. Zhao et al. (2019)

3. Retraining your model

This is probably the most popular technique (after doing nothing). It may sound straightforward, but it shouldn’t be applied blindly. There are different ways to do it and important things to consider. We need to decide on which data to train the model and how to do it.

3.1 Train on both old and new data

This is particularly useful when we see a drop in performance because a big percentage of the production data moves to regions where the training data was not very well represented.

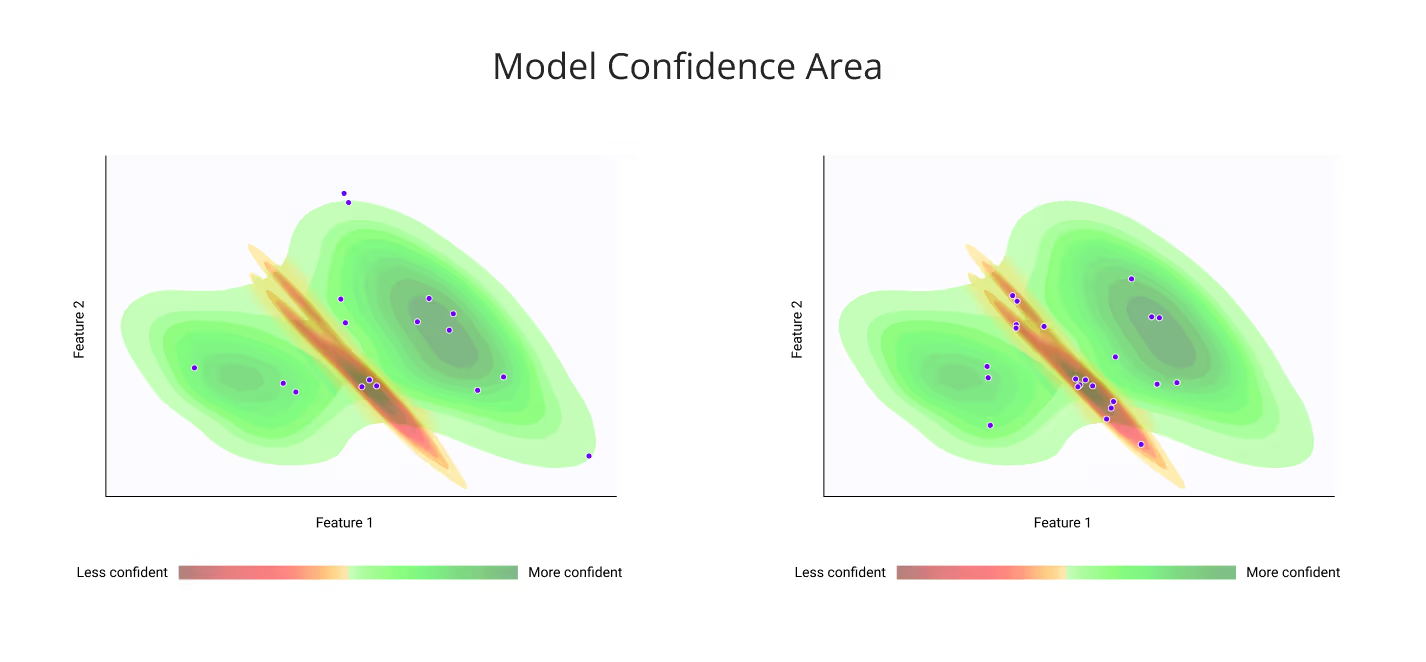

For example, in the image below, we see how initially (left picture) only a small portion of the production data (purple dots) are coming from the less confident area (red-ish region). But after a while (right picture) more and more examples start coming from the less confident area. This behavior will probably translate into a drop in the ML model’s performance.

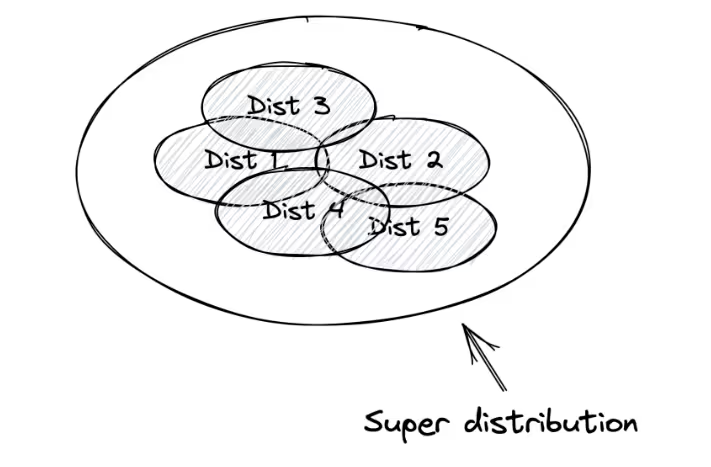

One method to fix this is to collect more labeled data and train the model on both old and new data. The idea behind this is to capture as many possible distributions in a dataset and build a model complex enough that can learn them. So anything that happens during production time, the model has probably already seen something “similar”.

Example when it would work ✅

Recently a supermarket implemented an ML model to identify fruits and vegetables. So when customers buy them, the cashier doesn’t have to manually introduce a code before weighing it because the computer vision model recognizes the item.

When deployed to production, the supermarket noticed that the sales of carrots 🥕 increased at unusual magnitudes. After noticing the issue, they found out that when the cashiers hold the items in front of the computer vision system, the model often miss-classified their fingers as carrots. One way to deal with this is to train the model not only on vegetable images alone but on vegetable images being held by someone.

Example when it wouldn’t work ❌

A bank uses an ML model to make loan default prediction. Let’s say the model starts to underperform due to a drift in the recent production data. We may think about retraining the model with old and just acquired data to solve the issue.

But unfortunately, it is not that simple. To be able to use today’s data as training data, we would need to wait at least a month. Why? Because it is until then that we know if a customer is going to default or not on their loan.

In this example, we are seeing the performance drop and the drift happening, but we don’t have the targets yet. We would need to wait for the labels before we retrain the model with data coming from the problematic distribution.

In general, taking this action makes sense when we are observing a data drift but we know that our previous distribution still holds.

We should be careful when there is an indicator of concept drift. Trying to learn both concepts at the same time would lead to worse performance.

Cons

- We need ground truth for this. Getting more labeled data is often a long and expensive task.

- You will probably need to start the training process from scratch.

3.2 Fine-tune the old model with the new data

This is a popular technique when we are working with neural networks and or gradient boosting methods such as LightGBM where we can refit the model with newly acquired data while preserving the old model’s structure. Instead of training the model from scratch every time we collect new data, we fine-tune the old model with the new data.

Example when it would work ✅

A company launched an app to help people take notes on their tablets. Internally the app runs an ML model to recognize handwritten characters and help users do a text search across their notes.

The company notices that the more people interact with the app, the worse the ML model becomes. After a careful examination, the company realizes that after a while of writing in the app, the users start getting more comfortable and their handwriting becomes more clumsy and less readable.

In this case, the company could fine-tune their previous model with new labeled data containing a diversity of examples showing readable, and less readable characters coming from different handwriting styles.

Example when it wouldn’t work ❌

Let’s revisit the example of the bank that uses an ML model to make loan default prediction. But this time, we are aware that to get new train data we need to wait at least a month until we know the realized label. So, we wait. After getting everything that we need, we proceed to fine-tune an old model with new labeled data.

Can you spot the subtle mistake here?

The old model was probably trained months or years ago. But the loan default problem is tricky. The data is not static, an old customer’s label (default or not default) can change any month. So we need to retroactively update historical labels and by only fine-tuning an old model, we can’t do that.

In this particular case, we would need to wait until we get new labeled data, update historical labels and then retrain a model from scratch.

In general taking, this action makes sense when your previous model still works for most of the data and you don’t want to train a new one from scratch. And it is not recommended when there is a significant change in the underlying distribution of the data, and the old distribution is no longer valid to solve the problem.

Additionally, you should decide which data to use to fine-tune your model. Data from last month, quarter, or year? Or data collected after the last time you fine-tuned? Or data from when the shift started to happen?

The best method to decide this is to experiment with different conditions and analyze the results.

3.3 Weighing Data

The idea of this method is to give more importance to recent data. If the new data is more relevant to the business problem we can weigh the recent data in a way that the model gives more importance to it.

Example when it would work ✅

An airline that forecasts how many flights are going to occur in the following days. During the COVID-19 pandemic consumer behaviors changed drastically and fewer people were buying flight tickets. The airline could give bigger weights to more recent transactions so the model could learn the change.

Example when it wouldn’t work ❌

This method doesn’t make sense when old and new data are equally important to solve a problem.

A company developing an app that recognizes handwriting text would treat with the same importance old and newly acquired examples. Their goal is to be able to recognize handwriting characters when they are written perfectly or clumsy. Every example shares the same importance.

This action is often taken when there are indications of concept drift but the old data can still bring value. Additionally, you may want to re-evaluate what features to use, which type of model to apply what performance metric to track, and build a more relevant test set with a big portion of recent data.

4. Reverting back to a previous model

Maybe you previously had a model that was working perfectly and after the last update, the performance started to decay. In this case, it would be worthwhile to bring back the old model and run an analysis on the new one since it may be failing because of human errors during training such as data leakage.

5. Change Business Process

We could deal with the issues downstream, change the business rules and or run manual analysis on predictions coming from the drifting distributions.

Example when it would work ✅

A supermarket chain uses a forecasting tool to estimate how many units of each product are going to be sold next week. This helps the branch managers know how much to order from their suppliers.

Recently the model has been underperforming for toilet paper and cleaning products. In this case, we could notify the branch managers to use their own judgment when placing orders for these products. And do a manual overwrite.

Example when it wouldn’t work ❌

When we require a model to operate in a fully automated system. For example, an autonomous-driven system should be trained with a massive dataset containing edge cases for every situation.

6. Refactoring the use case

Sometimes, going back to the sketch board and revisiting which features to use, what feature engineering techniques to apply, and what type of model to train can help us to build more robust features and prevent future drifts.

As mentioned by Chip Huyen in the Stanford course CS 329S: Machine Learning Systems Design:

When choosing features for your models you might want to consider the trade-off between the performance and stability of the feature.

A feature can be really good for the accuracy of the model but it might change or deteriorate quickly so you would need to train your model more often.

Chip gives the example of a model predicting if a user is going to download an app from the app store. We could be tempted to use the app ranking information to perform this task. But app rankings change very frequently making this feature prompt to shift. We could create buckets of 0-10, 10-100, 100-1000, etc, and use this as a feature instead.

Now that we understand all the cards we can play to fight the data drift that is causing the model degradation issue. We can take an informed decision and start our fix.

In this case, we were lucky the company followed the best practices when it comes to building machine learning systems. And had an ML monitoring system in place. If there are no indications of concept drift a good way to address the data drift in our initial story is to collect more data from the problematic distribution and retrain the model with the old + new data. As well as making a more robust test set.

Check out Failure Is Not an Option: How to Prevent Your ML Model From Degradation to read about a real case example of when the lack of effective ML monitoring affected a business where it most hurts 💸

Worried that nobody is babysitting your ML models? Check out our open-source tool to detect silent model failures before is too late. And leave us a ⭐

.avif)